AI Brain Fry

You didn't get your time back. You got a faster treadmill.

Last Friday afternoon, I hit a wall.

I’d spent the day deep in AI-assisted research: a Charter Forum session with four companies comparing their AI fluency frameworks, a re-read of a new BCG paper on cognitive overload, fresh Atlassian research, and a genuinely mind-bending piece by SaaStr’s CEO on what it’s actually like to manage AI agents. I was learning fast and loving it.

Then, somewhere around 2pm, I noticed I’d stopped reading carefully. I was generating output, skimming AI responses, and missing mistakes and AI-generated slop that I’d have caught an hour earlier. My RAM was full. I closed the laptop and went for a run.

Turns out I’m a data point: I was experiencing “AI brain fry.”

As Breakthru’s Melissa Painter told me: “We make a lot of errors when we move too fast and when we look for shortcuts. And technology is constantly promising instant frictionless shortcuts.”

To go deeper, I called Gabriella Rosen Kellerman, co-author of a new HBR study on AI brain fry, to discuss the implications.

The oversight trap

Kellerman and her colleagues at BCG¹ describe AI brain fry as acute mental fatigue from marshaling cognitive oversight beyond one’s capacity. AI brain fry is associated with a 39% increase in major errors: not typos, but safety-critical and outcome-altering mistakes. Intent to quit also rises 39% (from 25% to 34%).

This form of cognitive overload already affects 14% of U.S. workers, so it isn’t a fringe problem. What makes it even more acute for busy executives: the workers most at risk aren’t the reluctant adopters but your AI champions. They’re the most active AI users, who are 40% more likely to be “critical to retain,” according to Cisco.

Kellerman points out that burnout and AI brain fry are distinct risks. Burnout is driven by physical and emotional distress. What her team found was a new and more acute phenomenon: cognitive overload from “marshalling attention, working memory, and executive control” beyond your capacity.

SaaStr CEO Jason Lemkin, who has moved 60% of his sales function to AI agents, put a sharp point on it. Managing an AI agent requires about 3 to 4 hours per week, essentially identical to managing a human direct report. The difference is that 4 hours spent managing a human yields roughly 40 hours of work, while 4 hours managing an AI agent yields up to 168 hours of output, because the agent runs 24 hours a day, seven days a week. Your oversight has to keep pace with all of it.
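Put as output per hour of management, the gap is easy to see. Here is a back-of-the-envelope sketch, assuming (as the comparison implies) a 40-hour human work week, an agent running all 168 hours of the week, and about 4 hours of weekly management for each; the function name is illustrative, not from any source.

```python
# Back-of-the-envelope version of the Lemkin comparison, using the figures above.
# Assumptions: a human report works 40 hours a week, an agent runs all
# 24 * 7 = 168 hours, and each takes about 4 hours of management per week.
HUMAN_HOURS_PER_WEEK = 40
AGENT_HOURS_PER_WEEK = 24 * 7  # 168
OVERSIGHT_HOURS_PER_WEEK = 4


def output_per_oversight_hour(output_hours: float, oversight_hours: float) -> float:
    """Hours of work produced for each hour of management attention."""
    return output_hours / oversight_hours


human = output_per_oversight_hour(HUMAN_HOURS_PER_WEEK, OVERSIGHT_HOURS_PER_WEEK)
agent = output_per_oversight_hour(AGENT_HOURS_PER_WEEK, OVERSIGHT_HOURS_PER_WEEK)

print(f"Human report: {human:.0f} hours of work per oversight hour")  # 10
print(f"AI agent:     {agent:.0f} hours of work per oversight hour")  # 42
print(f"Ratio:        {agent / human:.1f}x")                          # 4.2x
```

Under those assumptions, each hour of oversight covers roughly 4.2 times as much output from the agent as from a human report: the faster treadmill, in numbers.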

Work didn’t get lighter; the treadmill just got faster. The result? Top talent starts looking for better options, or starts to break. As Kellerman noted: “I’ve heard about a lot of turnover in that [heavy user] population, and questions from clients: how do I retain top AI talent?”


More agents, more problems

The answer is not “Pile on more agents.”

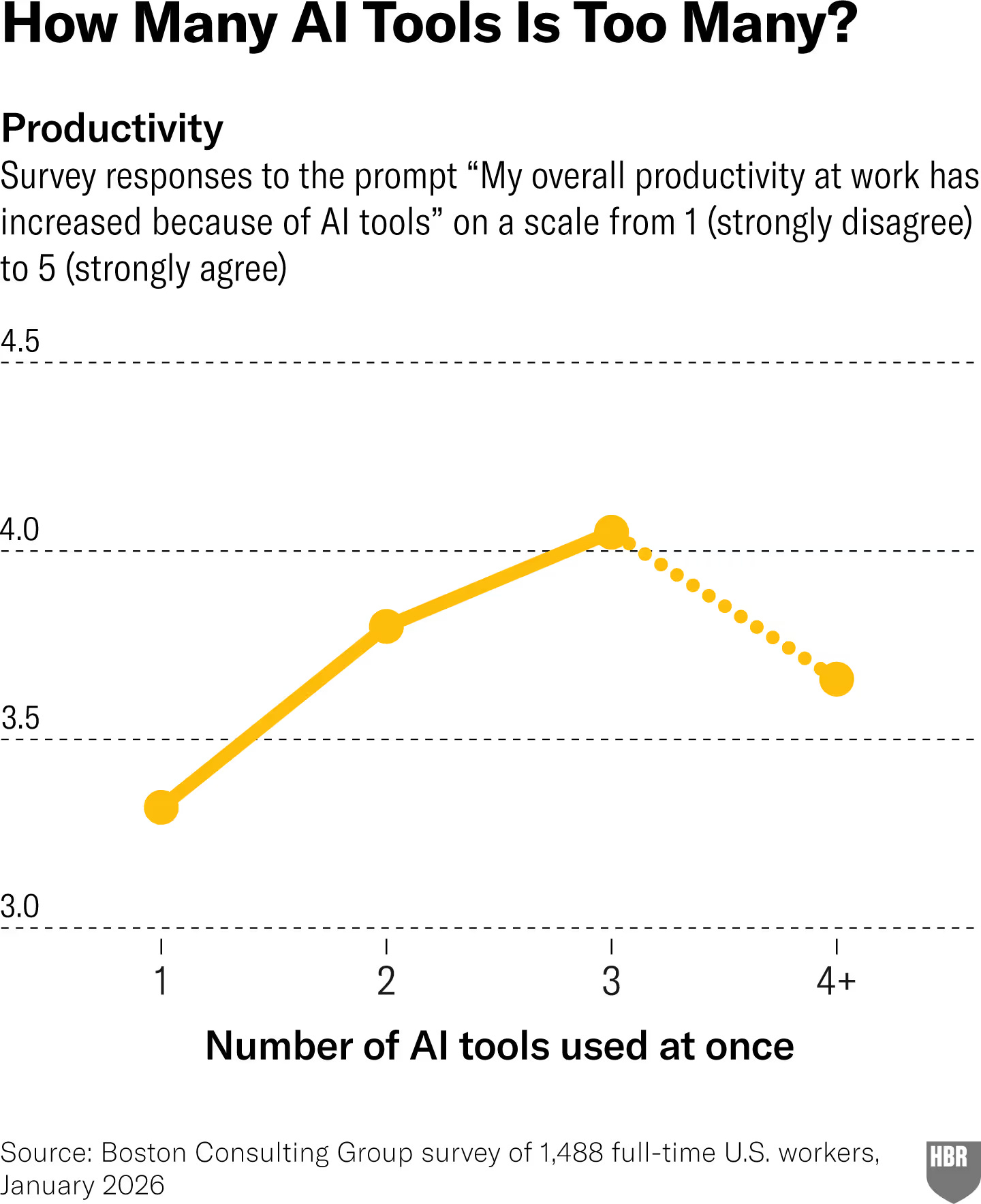

If your engineers are telling you they’re leveraging 5, 10 or more agents, their quality is likely taking a nosedive (and they might be using stimulants to keep up). Kellerman and team’s research found that productivity actually starts to drop after working with 3 or more AI tools simultaneously.

Source: HBR 2026

Anthropic’s AI Fluency Index surfaces another trap: polished output gets less scrutiny. When outputs look finished, evaluation of the AI’s reasoning falls by 3 percentage points, and identification of missing context drops by more than 5 points. The better the output looks, the less likely you are to catch what’s wrong with it.

Combine fatigue, too many tools, and polished output, and you get a compounding failure mode: overloaded workers producing an increasing volume of AI-assisted outputs while their capacity to evaluate quality declines. The potential for client and PR disasters, customer support debacles, and far worse (increased litigation, injuries, false arrests, even suicide) is real, and growing.

Burnout was already here

The mental fog of AI brain fry is distinct from burnout, and the populations don’t fully overlap. That just means employers are dealing with more issues as AI expands, not fewer.

Kelly Monahan’s research at Upwork last year found top AI users were twice as likely to quit and showed 88% higher burnout rates. Anthropic found many of the same issues among its own engineers.

Want to make it better? BCG’s study found that workers who use AI to eliminate repetitive tasks see burnout scores drop by 15%. The problem is that toil reduction isn’t what most organizations are actually deploying AI for.

As Ben Ostrowski of Atlassian put it: “The early AI ROI market was full of ‘saved 30 minutes’ stats. Most of the time, that was just reinvested back into admin tasks or correcting AI output.”

Recently, ActivTrak found that time on email, messaging, and admin tools more than doubled while time for focused, uninterrupted work (the kind required for complex thinking and strategy) fell 9% among AI users.

Which means BCG’s finding — that toil reduction actually lowers burnout — is being undermined at the organizational level before it has a chance to work. The time AI frees up is being immediately refilled with more administrivia.

Resilience is not a strategy

Kellerman is emphatic that this is a solvable problem, but only if organizations treat it as a design challenge rather than an individual resilience issue.

Engaged managers. Workers whose managers actively answer AI-related questions and treat adoption as a collective effort experience 15% lower mental fatigue. Conversely, workers left to figure out tools alone face what Kellerman calls the “AI orphan tax”: a 5% fatigue premium for going it alone.

“Being there to help humanize the experience of work, to help make it feel like a collective effort — that’s a huge part of what managers should be doing right now,” Kellerman told me.

Teams at the center. The research finds that integrated AI workflows at the team level reduce fatigue, while high variation in usage within a team increases it. Teams driven by peer pressure to adopt AI (the implicit competitive dynamic of everyone racing to demonstrate their individual fluency) show higher fatigue scores than teams working toward shared goals.

Three of the four companies I spoke with in my Charter Forum session had already figured this out: they’d shifted their primary AI adoption metric from individual usage rates to team-level integration. When one strong “builder” on a team embeds AI into shared workflows, the whole team benefits without everyone suffering the overhead of independent experimentation.

“It’s not like an organization is explicitly saying, ‘Your workload’s going to increase because of AI,’” Kellerman noted. “This is more about the implicit messages coming through.”

Increase autonomy. Workers who feel AI is expanding their sphere of accountability show 12% more mental fatigue than those who experience it as a liberating tool. Same technology, different framing, measurable difference in cognitive strain.

Build breaks. Melissa Painter, founder of Breakthru and a longtime researcher on cognition and movement, pushes this further. Two minutes of movement improves working memory, attention, and executive function for up to two hours. Microbreaks have been shown to reduce fatigue while preserving high levels of cognitive performance.

Painter is also seeing something new in her own behavioral data: in just the last few months, users have increasingly clicked “surprise me” when choosing a break type, because decision fatigue is so high they can’t even select what kind of rest they need.

“We need breaks because we designed a workday that’s too busy,” Painter told me. “And we designed a workday that’s too busy because our tech enabled it. Now we’re adding more tech and wondering why people are burning out.”

Painter’s deeper point is about what’s at stake when cognitive labor gets offloaded too completely. Memory is about synthesis, not just storage: when you skip the friction of processing information yourself, you lose the learning. “The only way to listen to your own body is to listen to your own body,” she says.

Which explains why I’m back to taking notes on paper.

Five things to do this week

Redefine span of control to include agents. Norms exist for how many human direct reports a manager can effectively support. The same logic applies to AI agent oversight: adverse effects begin to appear at three simultaneous agents. Map your team’s current oversight load before adding more.

Be explicit about the workload deal. Don’t let “AI will make you more productive” land as “you’re now accountable for more” without also alleviating toil. Put resources behind the hard work of toil reduction: mapping the often-invisible flows of work and reducing the administrative burden.

Invest manager time in AI questions. Helping team members navigate what to use, how, and when is now core management work. The 15% lower fatigue among workers with supportive managers is a measurable way to protect retention of your best adopters. Start with team discussions, drawing on Atlassian’s AI working agreements.

Invest time in team-based learning. Barb Cadigan, chief people officer at Affirm, shared at Charter’s AI summit that adopting AI agents in engineering required a similar collective approach. Over 900 engineers set aside everything outside hiring and urgent bug fixes for a week to experiment and learn together.

Build breaks into AI-intensive workflows. We need to build cognitive infrastructure. Two minutes of movement before switching between complex AI tasks. Hard stops on the number of agents anyone is expected to run. Not just permission but also modeling: leaders themselves need to demonstrate boundaries and take breaks.
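For the first item, redefining span of control, mapping your team’s oversight load could start as simply as the sketch below. It is a minimal illustration, assuming you can count each person’s human reports and the agents they oversee at once; the `TeamMember` structure, the function names, and the sample team are hypothetical. The three-agent threshold comes from the research cited in this piece, and counting an agent like a human report follows Lemkin’s 3-to-4-hour estimate.

```python
from dataclasses import dataclass

# Threshold from the text: adverse effects begin to appear at three
# simultaneous agents. Treat it as a starting point, not a hard rule.
AGENT_THRESHOLD = 3

# Assumption: each human report or agent costs about 4 hours of management
# per week (the upper end of Lemkin's 3-4 hour estimate).
HOURS_PER_REPORT = 4


@dataclass
class TeamMember:
    name: str
    direct_reports: int  # human reports this person manages
    active_agents: int   # AI agents this person oversees at the same time


def total_span(member: TeamMember) -> int:
    """Span of control with agents counted the same as human reports."""
    return member.direct_reports + member.active_agents


def weekly_oversight_hours(member: TeamMember) -> int:
    """Rough weekly management load under the 4-hours-per-report assumption."""
    return total_span(member) * HOURS_PER_REPORT


def over_agent_threshold(team: list[TeamMember], threshold: int = AGENT_THRESHOLD) -> list[str]:
    """Names of people running at least `threshold` agents simultaneously."""
    return [m.name for m in team if m.active_agents >= threshold]


# Hypothetical team, for illustration only.
team = [
    TeamMember("Avery", direct_reports=6, active_agents=1),
    TeamMember("Blake", direct_reports=0, active_agents=5),
    TeamMember("Casey", direct_reports=2, active_agents=3),
]

flagged = over_agent_threshold(team)
for m in team:
    note = "  <- at or above agent threshold" if m.name in flagged else ""
    print(f"{m.name}: span {total_span(m)}, ~{weekly_oversight_hours(m)} h/week{note}")
```

Counting agents and people on the same scale makes it visible when someone with no human reports is still carrying a sizeable management load. The hours figure is only as good as the 4-hour assumption; swap in your own estimates.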

If your most capable AI users are also your most burned out, the problem isn’t their “resilience”; it’s your workflow design, your culture, and your leadership.

My Friday fixed itself with a run and a quiet Saturday morning. The research is increasingly clear on what does work: treat human attention as the finite resource it actually is, and design around it accordingly.

The alternatives are increasingly ugly.

Is “brain fry” a familiar feeling? Let me know what you think…and what you plan to do about it!

One more thing…

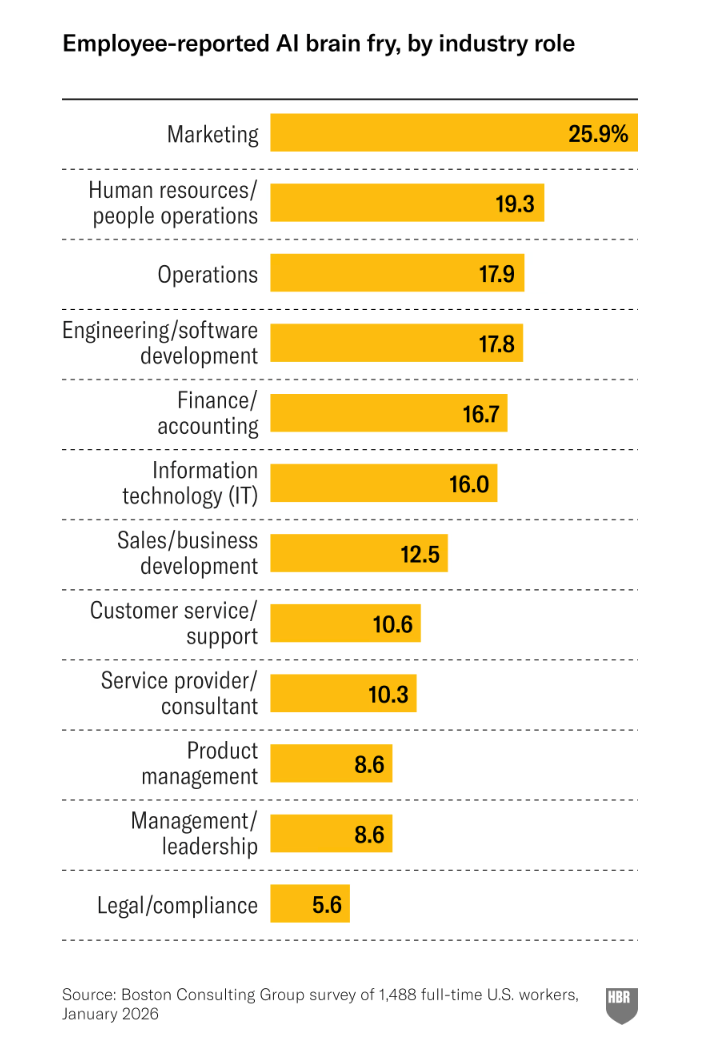

The challenge gets steeper when it’s less clear what “good” looks like. Marketing has the highest rate of AI brain fry, nearly twice the rate in Sales. On the Hard Fork podcast, BCG’s Julie Bedard offered her take on why: in marketing, quality is more subjective. When is a piece of content good enough to be “done”?

Source: HBR

¹ I’m a senior advisor with BCG (Boston Consulting Group), but I am not compensated for liking, sharing, or talking about their work.
